From Documents to Knowledge Graphs: The Doc2KG Pipeline Is Now Functional

After several months of thinking and development, I’m pleased to announce that the Doc2KG pipeline has reached an important milestone: a fully functional end-to-end version is now working.

For a demonstration, watch the YouTube vlog, or see the details in the wiki on GitHub.

Doc2KG is a toolchain designed to convert normative documents, such as official codes and standards, into a Knowledge Graph (KG) that can be queried, analysed, and enriched using modern graph and AI techniques.

This first functional version demonstrates the full workflow, from PDF ingestion to a graph representation in Neo4j.

Doc2KG favours a Human-Software Aided Crafting approach: it prioritises accuracy and human oversight over the speed of automated chunking and entity extraction, in order to produce higher-quality outputs.

Why Doc2KG?

Many important documents (standards, regulatory guidance, technical manuals) contain rich semantic structure and relationships. However, once published as PDFs, that structure becomes difficult to reuse programmatically.

Doc2KG addresses this problem by transforming documents into a machine-readable graph structure while preserving the meaning and hierarchy of the original content.

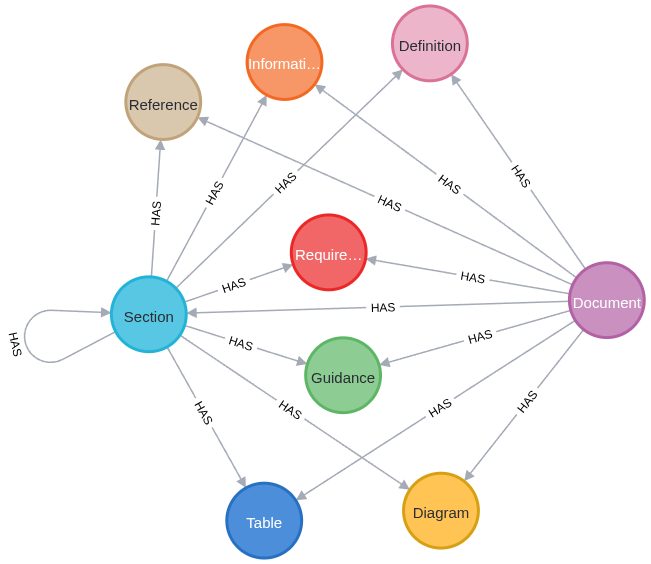

The goal is not simply to extract text, but to capture semantic relationships between document elements, such as:

- sections and subsections

- requirements and guidance

- definitions

- references to other documents

Representing these relationships as a knowledge graph enables new possibilities for search, traceability, compliance analysis, and AI-assisted reasoning.
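
To make the idea concrete, here is a minimal in-memory sketch of typed nodes and typed relationships. It is an illustration only, not the Doc2KG data model: the class names, the relationship types `CONTAINS` and `USES_TERM`, and the sample texts are all assumptions made up for this example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Node:
    id: str
    label: str  # e.g. "Section", "Requirement", "Definition"
    text: str


@dataclass
class Graph:
    nodes: dict = field(default_factory=dict)  # node id -> Node
    edges: list = field(default_factory=list)  # (source id, type, target id)

    def add_node(self, node: Node) -> None:
        self.nodes[node.id] = node

    def add_edge(self, source: str, rel_type: str, target: str) -> None:
        self.edges.append((source, rel_type, target))

    def neighbours(self, source: str, rel_type: str) -> list:
        """Return the nodes reachable from `source` over one edge of `rel_type`."""
        return [self.nodes[t] for s, r, t in self.edges
                if s == source and r == rel_type]


# Hypothetical content, invented for the example.
graph = Graph()
graph.add_node(Node("s1", "Section", "4 Doors"))
graph.add_node(Node("r1", "Requirement", "Exit doors shall open outwards."))
graph.add_node(Node("d1", "Definition", "Exit door: a door on an escape route."))
graph.add_edge("s1", "CONTAINS", "r1")
graph.add_edge("r1", "USES_TERM", "d1")

# Traceability-style question: which definitions does requirement r1 rely on?
terms = graph.neighbours("r1", "USES_TERM")
```

Even in this toy form, a question such as "which definitions does this requirement depend on?" becomes a one-line lookup rather than a text search.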

A Human-Guided Pipeline

One of the key design principles of Doc2KG is that document conversion is not fully automated.

Instead, the system follows a human-guided, computer-assisted workflow:

1. Document ingestion: A PDF is uploaded or downloaded from a source URL.

2. Text and page extraction: The system extracts raw text and generates page images.

3. Range selection: Users define which regions of pages should be processed, allowing headers, footers, and other boilerplate content to be excluded. This ensures that complex booklet and multi-column layouts are extracted correctly.

4. Manual structuring: The extracted text is organised into labelled blocks such as Section, Requirement, Information, and Definition.

5. Graph generation: These blocks are converted into nodes and relationships in a Neo4j knowledge graph.

This approach combines automation for repetitive processing with human judgement for semantic interpretation, which is essential when dealing with normative documents.
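
The range selection and graph generation steps can be sketched roughly as follows. This is a simplified illustration under stated assumptions, not the Doc2KG implementation: the `Word` and `Region` types, the coordinate convention (origin at the top-left of the page), the numeric block levels, and the `HAS_CHILD` relationship name are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    x: float  # left edge, in page units
    y: float  # top edge, in page units


@dataclass
class Region:
    x0: float
    y0: float
    x1: float
    y1: float

    def contains(self, w: Word) -> bool:
        return self.x0 <= w.x <= self.x1 and self.y0 <= w.y <= self.y1


def extract_region(words, region):
    """Keep only the words inside the region, top to bottom then left to right."""
    kept = sorted((w for w in words if region.contains(w)),
                  key=lambda w: (w.y, w.x))
    return " ".join(w.text for w in kept)


def build_hierarchy(blocks):
    """Turn (level, label, text) blocks into nodes and parent-to-child edges.

    A stack holds the currently open ancestors; a block attaches to the
    nearest open block that has a smaller level number.
    """
    nodes, edges, stack = [], [], []
    for i, (level, label, text) in enumerate(blocks):
        node_id = f"n{i}"
        nodes.append({"id": node_id, "label": label, "text": text})
        while stack and stack[-1][0] >= level:
            stack.pop()
        if stack:
            edges.append((stack[-1][1], "HAS_CHILD", node_id))
        stack.append((level, node_id))
    return nodes, edges


# A page with a running header at the top that the user wants excluded.
page = [Word("Page", 50, 10), Word("3", 90, 10),
        Word("Doors", 50, 100), Word("shall", 95, 100),
        Word("open", 140, 100), Word("outwards.", 50, 115)]
body = Region(0, 50, 600, 750)
text = extract_region(page, body)

blocks = [(1, "Section", "4 Doors"),
          (2, "Requirement", text),
          (2, "Information", "See also section 5."),
          (1, "Section", "5 Windows")]
nodes, edges = build_hierarchy(blocks)
```

For a multi-column page, the same idea applies with one region per column, processed in reading order. Sorting on exact `y` values is a simplification; real extracted text usually needs some tolerance when grouping words into lines.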

Current Architecture

The current implementation consists of several components working together:

- Doc2KG Backend (Node.js REST API)

- Neo4j for graph storage and querying

- MinIO for document and asset storage

- Local LLM via Ollama for metadata extraction and text embeddings

- A web workbench interface for document structuring

The backend handles document ingestion, extraction, graph generation, and embedding creation. The workbench provides the environment where users define document structure before generating the final knowledge graph.
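
As a rough idea of what graph generation might involve on the Neo4j side, the sketch below builds parameterised Cypher statements for a node and a relationship. The actual backend is written in Node.js; Python is used here purely for illustration, and the property names (`id`, `text`), the label whitelist, and the function names are assumptions rather than the real Doc2KG schema.

```python
import re

# Assumed label set, taken from the block types named in this post.
ALLOWED_LABELS = {"Section", "Requirement", "Information", "Definition"}
REL_TYPE_PATTERN = re.compile(r"^[A-Z][A-Z_]*$")


def node_statement(node_id, label, text):
    """Return (query, params) that creates or updates one node.

    The label is interpolated into the query text instead of being passed
    as a parameter, so it is checked against a whitelist first.
    """
    if label not in ALLOWED_LABELS:
        raise ValueError(f"unknown label: {label}")
    query = f"MERGE (n:{label} {{id: $id}}) SET n.text = $text"
    return query, {"id": node_id, "text": text}


def relationship_statement(source_id, rel_type, target_id):
    """Return (query, params) that links two existing nodes."""
    if not REL_TYPE_PATTERN.match(rel_type):
        raise ValueError(f"invalid relationship type: {rel_type}")
    query = ("MATCH (a {id: $source}), (b {id: $target}) "
             f"MERGE (a)-[:{rel_type}]->(b)")
    return query, {"source": source_id, "target": target_id}


node_query, node_params = node_statement(
    "r1", "Requirement", "Exit doors shall open outwards.")
rel_query, rel_params = relationship_statement("s1", "HAS_CHILD", "r1")
```

Using `MERGE` rather than `CREATE` means that re-running graph generation for the same document updates existing nodes instead of duplicating them. Each `(query, params)` pair would then be handed to whichever Neo4j driver the backend uses.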

Semantic Search and Embeddings

Each graph node can also receive a vector embedding generated by a local LLM.

This allows the knowledge graph to support semantic search, meaning queries can find relevant requirements or definitions based on meaning rather than exact wording.

Using a local Ollama service ensures that the entire pipeline can run without relying on external APIs.
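
The core of semantic search is a similarity ranking over those embeddings. The sketch below shows the idea with cosine similarity on tiny hand-written vectors. The vectors and node ids are invented for the example; real embeddings would come from the embedding model and have hundreds of dimensions, and in practice the lookup would more likely be delegated to a vector index than done in application code.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query_vec, node_vecs, top_k=2):
    """Return the ids of the top_k nodes most similar to the query vector."""
    scored = [(cosine_similarity(query_vec, vec), node_id)
              for node_id, vec in node_vecs.items()]
    scored.sort(reverse=True)
    return [node_id for _, node_id in scored[:top_k]]


# Toy embeddings keyed by node id (hypothetical values).
node_vecs = {
    "r1": [0.9, 0.1, 0.0],
    "d1": [0.7, 0.3, 0.1],
    "s9": [0.0, 0.2, 0.9],
}
results = search([1.0, 0.0, 0.0], node_vecs)
```

Here the query vector points in almost the same direction as `r1` and `d1`, so those two nodes rank first even though no wording is compared at all.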

What Works Now

The current version supports the complete pipeline:

- Upload or download a PDF document

- Extract text and page images

- Define extraction ranges

- Structure text into labelled blocks

- Generate a hierarchical knowledge graph

- Store nodes and relationships in Neo4j

- Generate optional embeddings for semantic search

In other words, the pipeline now works end-to-end.

What Comes Next

While the core pipeline is functional, there are several areas for further development:

- improving the editing and validation tools in the workbench

- enhancing automatic detection of document structure

- expanding graph analysis and querying capabilities

- integrating graph-based retrieval with LLM reasoning workflows

Ultimately, the aim is to make complex documents computable knowledge sources, enabling new ways to explore and analyse them.

Closing Thoughts

Building Doc2KG has been an interesting exploration at the intersection of document engineering, knowledge graphs, and AI.

Reaching a functional pipeline is an important step, but it is only the beginning. The real value will come from applying this approach to real-world document collections and exploring what new insights can emerge when documents become queryable knowledge structures.

If you are interested in knowledge graphs, document processing, or AI-assisted governance analysis, stay tuned: there is more to come. Please do not hesitate to contact me via my LinkedIn profile.